This guide, “Unveiling the Power of A/B Testing Platforms,” explains how to make data-driven decisions through controlled experiments. In a competitive market, understanding your audience and optimizing your strategies are essential. The sections below cover how A/B testing works and how dedicated platforms help marketers, product managers, and business leaders make informed choices, improve user experiences, and measure the effect of each change.

This article explores A/B testing, also known as split testing, and the role that A/B testing platforms play in the process. It covers the core principles, methodologies, and practical applications of A/B testing, then reviews the key features of A/B testing platforms so you can select a tool that helps you raise conversion rates, improve website performance, and optimize marketing campaigns.

What is A/B Testing and Why is it Important?

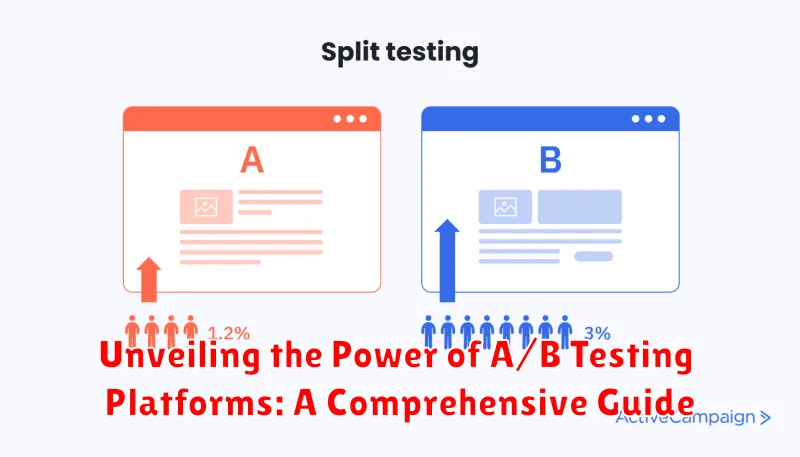

A/B testing, also known as split testing, is a methodology used to compare two versions of a webpage, app screen, or other marketing asset against each other to determine which one performs better. It involves randomly showing one version (A) to a segment of your audience and another version (B) to another segment, then analyzing which version achieves your desired outcome, such as higher click-through rates, increased conversions, or improved user engagement.
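The random split described above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular platform works: the function name `assign_variant` and the `user_id` and `experiment` parameters are assumptions made for this example. Hashing the user ID, rather than drawing a fresh random number on every visit, is one common way to keep each user in the same version across sessions.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, split: float = 0.5) -> str:
    """Return 'A' or 'B' for a user; the same inputs always give the same answer.

    `split` is the share of users who should see version A (hypothetical parameter).
    """
    # Including the experiment name keeps assignments independent across experiments.
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    # Map the first 8 bytes of the hash to a number in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return "A" if bucket < split else "B"


if __name__ == "__main__":
    for uid in ("user-1", "user-2", "user-3"):
        print(uid, assign_variant(uid, "cta-button-color"))
```

Because the assignment depends only on the inputs, no lookup table is needed to remember which version a user saw.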

The value of A/B testing lies in replacing guesswork with evidence. Instead of relying on intuition, you measure what actually resonates with your audience. This allows you to:

- Optimize marketing campaigns: Improve the effectiveness of your online advertising and email marketing efforts.

- Enhance user experience: Create a more intuitive and engaging experience for your website visitors.

- Increase conversion rates: Drive more sales, leads, and sign-ups.

- Reduce bounce rates: Keep users on your site longer by providing a more compelling experience.

- Minimize risks: Test changes on a small segment of your audience before implementing them site-wide.

By continually testing and refining your strategies, A/B testing enables you to maximize your ROI and achieve your business goals more effectively.

Key Features to Look for in an A/B Testing Platform

Selecting the right A/B testing platform is crucial for optimizing your digital assets. Consider these essential features:

- Ease of Use: A user-friendly interface is paramount for efficient test creation and analysis. Look for intuitive dashboards and drag-and-drop functionality.

- Robust Reporting: The platform should provide comprehensive data visualizations, statistical significance calculations, and detailed reports to accurately assess test performance.

- Segmentation Capabilities: The ability to segment your audience based on demographics, behavior, or other criteria allows for more targeted and insightful testing.

- Integration Options: Ensure the platform seamlessly integrates with your existing analytics, marketing automation, and CRM tools for a unified workflow.

- Personalization Features: Advanced platforms offer personalization capabilities that allow you to deliver tailored experiences based on A/B test results.

- Mobile Optimization: Verify the platform supports testing on mobile devices and provides insights into mobile user behavior.

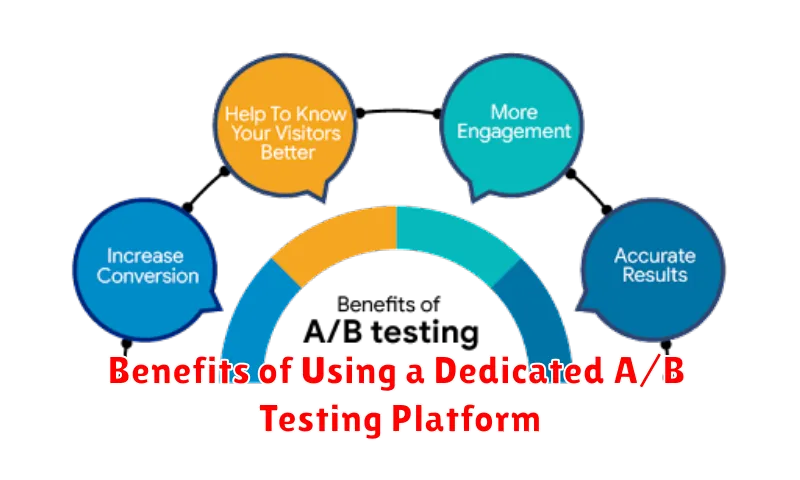

Benefits of Using a Dedicated A/B Testing Platform

Employing a dedicated A/B testing platform offers several distinct advantages over manual or ad-hoc testing methods. These platforms are specifically designed to streamline the experimentation process, leading to more efficient and impactful results.

One key benefit is enhanced accuracy. Dedicated platforms often incorporate statistical analysis tools that report whether an observed difference is statistically significant, reducing the risk of drawing incorrect conclusions from random variation.
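To make the idea of statistical significance concrete, here is a minimal sketch of a two-proportion z-test, one standard way to compare two conversion rates. It is an illustration only; platforms may use different methods (for example Bayesian or sequential approaches), and the function name and the sample figures are assumptions made for this example.

```python
from math import erfc, sqrt


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rates B - A."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that A and B convert equally.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("cannot test: pooled conversion rate is 0 or 1")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = erfc(abs(z) / sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Illustrative numbers only: 200/5000 conversions for A, 250/5000 for B.
    z, p = two_proportion_z_test(200, 5000, 250, 5000)
    print(f"z = {z:.2f}, p = {p:.4f}")
```

A p-value below the threshold chosen before the test (0.05 is a common convention) suggests the difference is unlikely to be due to chance alone.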

Furthermore, these platforms provide improved efficiency through automation. Tasks such as traffic allocation, data collection, and report generation are automated, freeing up valuable time for marketing teams to focus on strategy and optimization.

Dedicated platforms also offer superior scalability. They can easily handle a large number of tests and variations, making them suitable for businesses of all sizes. This scalability ensures that you can continuously optimize your website or application as your needs evolve.

Finally, many platforms provide advanced features like personalization and segmentation, allowing you to tailor your experiments to specific user groups for more targeted and effective optimization.

How to Implement A/B Testing Effectively

Implementing A/B testing effectively requires a structured approach. First, define a clear hypothesis. This involves identifying a specific problem or opportunity on your website or app that you want to improve. For example, “Changing the call-to-action button color from blue to green will increase click-through rates.”

Next, prioritize your tests based on potential impact and ease of implementation. Focus on tests that are likely to yield significant results with minimal effort. After selecting a test, define your key performance indicators (KPIs). These are the metrics you will use to measure the success of your variations. Common KPIs include conversion rates, click-through rates, bounce rates, and revenue per user.

Finally, ensure proper test setup. This includes segmenting your audience if necessary, calculating the required sample size before the test starts, and running the test for the full planned duration rather than stopping as soon as the results look significant. Use your chosen A/B testing platform’s features to track and analyze the results accurately.
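As a rough guide to what a sufficient sample size looks like, the sketch below uses the standard normal-approximation formula for comparing two proportions. The function names, the default significance level (0.05), the default power (0.8), and the choice to round the duration up to whole weeks are all assumptions made for this example; your platform's own calculator may use a different method.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline: float, relative_lift: float,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate visitors needed per variant to detect the given relative lift."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def planned_duration_days(n_per_variant: int, daily_visitors: int) -> int:
    """Days needed for two variants, rounded up to whole weeks."""
    days = ceil(2 * n_per_variant / daily_visitors)
    return ceil(days / 7) * 7


if __name__ == "__main__":
    # Illustrative inputs: 4% baseline conversion, hoping to detect a 25% relative lift.
    n = sample_size_per_variant(baseline=0.04, relative_lift=0.25)
    print(n, "visitors per variant")
    print(planned_duration_days(n, daily_visitors=1000), "days")
```

Smaller expected lifts require much larger samples, which is why low-traffic pages often need several weeks to produce a reliable result.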

Common Pitfalls to Avoid During A/B Testing

While A/B testing is a powerful tool, several common pitfalls can compromise the validity and effectiveness of your results. Avoiding these mistakes is crucial for drawing accurate conclusions and optimizing your strategies.

- Testing Too Many Elements Simultaneously: Changing multiple elements at once in a single variation makes it impossible to determine which change caused the observed effect. Test one variable at a time, or use a multivariate test designed to separate the effects.

- Insufficient Sample Size: Tests run with too little data are underpowered, so they can miss real effects or report misleading ones. Calculate the sample size needed for your target statistical power and significance level before you start.

- Ignoring Statistical Significance: Stopping a test as soon as one version looks like a winner, or skipping statistical analysis altogether, can lead to incorrect decisions. Always verify the statistical significance of your results before implementing changes.

- Lack of a Clear Hypothesis: Without a well-defined hypothesis, your tests lack direction and purpose. Formulate a clear hypothesis about what you expect to happen and why.

- Not Segmenting Your Audience: Treating all users the same can mask important differences in behavior. Segment your audience based on demographics, behavior, or other relevant factors to uncover more nuanced insights.

- Short Testing Durations: Running tests for too short a period can result in misleading results due to day-of-week effects or other short-term fluctuations. Allow enough time for the test to capture a complete range of user behavior.

Integrating Your A/B Testing Platform with Other Marketing Tools

Seamless integration of your A/B testing platform with other marketing tools is crucial for maximizing the impact of your optimization efforts. This allows for a holistic view of customer behavior and a more personalized user experience.

Key Integrations

- Customer Relationship Management (CRM): Integrate with your CRM to segment users based on their attributes and tailor experiments to specific customer groups.

- Marketing Automation Platforms: Connect with marketing automation platforms to trigger personalized campaigns based on A/B test results.

- Analytics Platforms: Link with analytics platforms like Google Analytics to gain deeper insights into user behavior and measure the impact of your experiments on key metrics.

- Email Marketing Platforms: Integrate with email marketing platforms to A/B test different email subject lines, content, and calls to action.

By integrating your A/B testing platform, you can create a closed-loop system where data flows seamlessly between different tools, leading to more informed decision-making and improved marketing performance.

A/B Testing Platform Pricing and ROI

Understanding the pricing structures and potential return on investment (ROI) is crucial when selecting an A/B testing platform. Pricing models vary, often depending on factors like the number of monthly active users (MAU), the number of tests you can run concurrently, and the level of support and features included.

Pricing Models

Common pricing models include:

- Freemium: Offers basic features for free, with paid upgrades for additional functionality.

- Subscription-based: Charges a recurring fee (monthly or annually) for access to the platform.

- Usage-based: Prices based on the number of experiments run or the volume of traffic tested.

- Enterprise: Customized pricing plans for large organizations with specific needs.

Calculating ROI

To determine the ROI of an A/B testing platform, consider the potential increase in conversion rates, revenue, and customer lifetime value (CLTV) that can be achieved through optimized website or app experiences. Compare these gains to the cost of the platform and the resources required to implement and manage A/B testing effectively. Prioritize platforms that offer transparent pricing and a clear path to demonstrable ROI.
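The comparison of gains against costs can be written as a simple calculation. The sketch below is a simplified model under stated assumptions: it considers only extra conversions over one period, ignores customer lifetime value, and every parameter name and input figure is hypothetical.

```python
def ab_testing_roi(visitors: int, baseline_rate: float, improved_rate: float,
                   value_per_conversion: float, platform_cost: float,
                   staff_cost: float) -> float:
    """Return ROI as a ratio: (gain - total cost) / total cost, for one period."""
    extra_conversions = visitors * (improved_rate - baseline_rate)
    gain = extra_conversions * value_per_conversion
    total_cost = platform_cost + staff_cost
    return (gain - total_cost) / total_cost


if __name__ == "__main__":
    # Illustrative inputs only: 100,000 monthly visitors, conversion rising from 2.0% to 2.2%.
    roi = ab_testing_roi(
        visitors=100_000,
        baseline_rate=0.020,
        improved_rate=0.022,
        value_per_conversion=50.0,
        platform_cost=2_000.0,
        staff_cost=3_000.0,
    )
    print(f"ROI: {roi:.0%}")
```

A result above zero means the gains attributed to testing exceeded its cost for that period; a negative result means they did not.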

Examples of Successful A/B Testing Campaigns

Numerous companies have leveraged A/B testing platforms to achieve significant improvements in key performance indicators (KPIs). Here are a few compelling examples:

Case Study 1: Optimizing Call-to-Action Buttons

A leading e-commerce retailer conducted an A/B test on their product pages, altering the color and wording of their primary call-to-action (CTA) button. The original button, a standard blue with the text “Add to Cart,” was tested against a green button with the text “Buy Now.” The green “Buy Now” button resulted in a 21% increase in click-through rates and a corresponding boost in sales. Because the color and the wording changed together, the test shows that the combination performed better but cannot attribute the lift to either change alone.

Case Study 2: Improving Headline Copy

A subscription-based software company experimented with different headline variations on their landing page. They tested two headlines: “Start Your Free Trial Today” versus “Unlock Your Potential with [Software Name].” The latter headline, which emphasizes the value proposition, increased sign-up conversions by 15%. This simple change had a significant impact on lead generation.

Case Study 3: Streamlining Form Fields

An online education provider analyzed its registration form and hypothesized that the number of fields was deterring potential students. They conducted an A/B test, reducing the number of required fields from seven to four. The simplified form resulted in a 34% increase in form submissions, demonstrating the importance of user experience.

The Future of A/B Testing: Trends and Innovations

The realm of A/B testing is continually evolving, driven by advancements in technology and shifts in user behavior. Several key trends are shaping its future trajectory.

Personalization at Scale: Expect to see more sophisticated personalization techniques integrated into A/B testing platforms. This involves leveraging machine learning to dynamically adjust experiences for individual users based on their unique characteristics and preferences, moving beyond basic segmentation.

AI-Powered Experimentation: Artificial intelligence and machine learning are playing an increasingly important role in automating and optimizing the A/B testing process. This includes using AI to identify optimal test variations, predict outcomes, and even generate new hypotheses. Platforms are beginning to use machine learning to prioritize which tests to run.

Multi-Armed Bandit Testing: There is a growing shift toward multi-armed bandit testing, where traffic is dynamically allocated to better-performing variations in real time, allowing for faster optimization and reduced opportunity cost.
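To illustrate how dynamic allocation can work, here is a minimal sketch of Thompson sampling, one well-known multi-armed bandit algorithm. It is not taken from any specific platform: the class name, the `simulate` helper, and the conversion rates used in the simulation are assumptions made for this example.

```python
import random


class ThompsonSampler:
    """Beta-Bernoulli Thompson sampling over a fixed set of variants."""

    def __init__(self, variants, seed=None):
        self.rng = random.Random(seed)
        self.successes = {v: 0 for v in variants}
        self.failures = {v: 0 for v in variants}

    def choose(self):
        # Draw a plausible conversion rate for each variant from its
        # Beta(1 + successes, 1 + failures) posterior and show the highest draw.
        draws = {
            v: self.rng.betavariate(1 + self.successes[v], 1 + self.failures[v])
            for v in self.successes
        }
        return max(draws, key=draws.get)

    def record(self, variant, converted):
        if converted:
            self.successes[variant] += 1
        else:
            self.failures[variant] += 1


def simulate(true_rates, rounds, seed=0):
    """Simulate visitors and return how often each variant was shown."""
    sampler = ThompsonSampler(list(true_rates), seed=seed)
    visitors = random.Random(seed + 1)
    shown = {v: 0 for v in true_rates}
    for _ in range(rounds):
        variant = sampler.choose()
        shown[variant] += 1
        sampler.record(variant, visitors.random() < true_rates[variant])
    return shown


if __name__ == "__main__":
    # Hypothetical true conversion rates, unknown to the sampler.
    print(simulate({"A": 0.05, "B": 0.20}, rounds=5000))
```

Early on, both variations receive traffic because the sampler is uncertain. As evidence accumulates, most visitors are sent to the variation that appears to convert better, which is the reduced opportunity cost mentioned above.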

Focus on Qualitative Data: There’s a growing emphasis on incorporating qualitative data, such as user feedback and session recordings, to gain a deeper understanding of why certain variations perform better than others. This human insight complements quantitative data, leading to more informed decisions.